You walk into a room and you know something is wrong.

Nobody told you. Nobody gave you a signal. You didn't run an analysis. You walked through a door and your body said "wrong" before your brain could form a sentence about it. Your shoulders tightened. Your breathing changed. Something in you measured the room and returned a verdict, and you didn't ask it to.

That's not instinct. Instinct tells you to duck when something flies at your head. This is different. This is a moral evaluation that happened below the level of conscious thought, faster than language, and with a confidence that you couldn't override even if you wanted to.

You are running a judgment layer. Right now. Continuously. And you didn't install it.

Let me show you how deep this goes.

In the 1990s, a neuroscientist named Antonio Damasio studied patients with damage to a specific brain region — the ventromedial prefrontal cortex. These patients were fascinating. Their intelligence was intact. Their memory was intact. They could describe moral rules perfectly — murder is wrong, stealing is wrong, lying is wrong. They knew the rules. They could articulate them, debate them, apply them to hypothetical scenarios with flawless logic.

But they couldn't feel them.

When confronted with a moral violation — real or hypothetical — the normal emotional response was absent. The gut-level "that's wrong" signal didn't fire. And what happened next is the part that should stop you: these patients made catastrophic life decisions. They lied without discomfort. They manipulated without guilt. They destroyed relationships with no felt consequence. Not because they didn't know better — they did. But the knowing wasn't connected to anything. The signal was disconnected from the speaker.

Damasio called the missing signals "somatic markers": bodily signals that tag decisions with emotional weight before conscious reasoning kicks in. The moral evaluation happens in the body first and reaches the mind second. It's not an opinion. It's a measurement. And when you knock out the hardware that runs it, the person still knows the rules but can't follow them.

That's a hardware/software distinction. The capacity to know what's right is software — you can learn it, articulate it, teach it. The capacity to feel that something is wrong is hardware. It comes pre-installed. And when the hardware is damaged, the software alone is not enough.

Now go younger. Way younger.

Developmental psychologists at Yale's Infant Cognition Center — the Baby Lab — have been running experiments on pre-verbal infants for years. Babies. Six months old. Before language. Before culture. Before anyone has taught them a single rule.

Here's what they found. Show a baby a puppet show. One puppet helps another puppet up a hill. A different puppet pushes the climber back down. Then offer the baby both puppets. The baby reaches for the helper. Consistently. Across cultures, across demographics, across every variable they've tested.

Six-month-old humans — who can't speak, can't reason abstractly, haven't been to Sunday school or read a philosophy textbook — are making moral evaluations. They prefer the helper. They avoid the harmer. They are sorting agents into categories based on moral behavior before they can say the word "moral."

That's not learned. You can't learn something before you have the cognitive architecture to learn with. That's not cultural. These babies haven't absorbed any culture yet. They can't even speak. That's pre-installed. That's firmware.

And it gets more specific. Older infants — around twelve to fifteen months — don't just prefer helpers. They expect fairness. Show them an unequal distribution of resources and they register surprise. They stare longer at unfair outcomes than fair ones, which is how developmental psychologists measure expectation violation in pre-verbal subjects. These tiny humans expect the world to be fair before anyone has told them it should be.

Now zoom out to the species level.

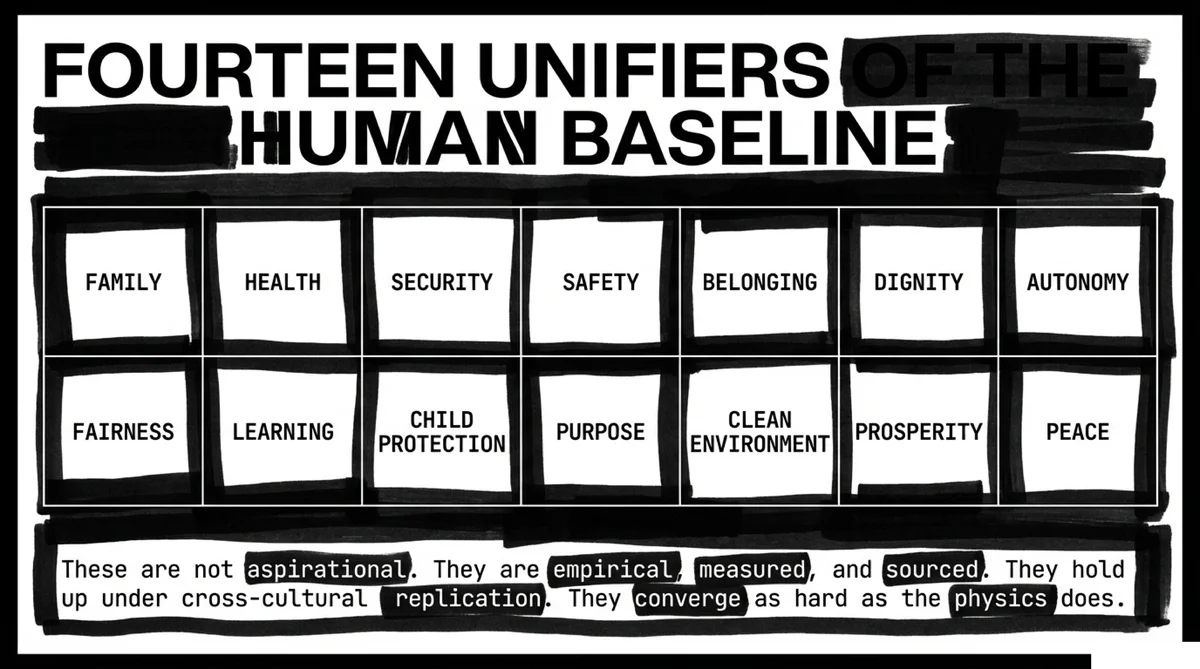

Anthropologists have spent decades cataloguing moral systems across every culture they can find — isolated tribes, modern cities, ancient civilizations, hunter-gatherers, agricultural societies, industrialized nations. The surface rules vary enormously. What you can eat, who you can marry, how you greet a stranger — all of that is cultural. It changes from place to place and century to century.

But underneath the surface rules, a small set of moral foundations shows up everywhere. Care for the vulnerable. Fairness in exchange. Loyalty to the group. Respect for authority. A concept of purity or sanctity. And a sense — however articulated — that certain things are simply wrong regardless of who does them or what the local rules say.

Not similar. Universal. Every culture. Every era. Every continent. Isolated groups with no contact with each other develop the same moral architecture independently.

The naturalist explanation for this is evolutionary. Morality is adaptive behavior — cooperating groups outperform selfish ones, so natural selection wired us for moral intuition because it improved survival odds. And that explanation works for the social layer. It makes sense that cooperation would be selected for. It makes sense that fairness would stabilize groups. It makes sense that care for offspring would propagate genes.

But here's where it breaks down. The evolutionary explanation accounts for moral behavior but not moral experience. It explains why we cooperate — survival — but not why we feel guilty when we don't. It explains why cheating is punished — group stability — but not why we experience an internal violation when we cheat even if no one will ever find out. It explains why we evolved to help each other — reproductive advantage — but not why a soldier throws himself on a grenade to save strangers he met three weeks ago.

The experience is the problem. The qualia of moral judgment (the felt wrongness, the guilt, the discomfort, the sense of violation) are not explained by survival advantage. A robot programmed to cooperate would cooperate without feeling anything. Evolution needed cooperation, not conscience. The conscience is extra. And "extra" demands an explanation.

Stay with me. This is the one worth sitting with.

We've got three data streams now. Neuroscience says the moral evaluator is hardware — damage it and the person can articulate morality but can't follow it. Developmental psychology says it's pre-installed — infants judge before they learn. Anthropology says it's universal — every culture, every era, same foundations.

Hardware. Pre-installed. Universal.

That's not a learned behavior. That's not a cultural product. That's not an emergent property of social dynamics. That's architecture. Someone designed the observer with a measurement apparatus already inside it.

And here's the thread that closes the circuit.

Two thousand years ago, a man named Paul wrote a letter to a church in Rome. He had never met most of them. He was writing to explain how God's moral standard applies to people who have never read the Torah — people who don't have the written law. And he made a claim that, when you read it now, sounds like he's describing the neuroscience.

"They show that the work of the law is written on their hearts, their conscience bearing witness, and their conflicting thoughts accusing or else defending them."

Romans 2:15.

Written on their hearts. Not taught. Not acquired. Written. Pre-installed. The conscience — bearing witness. Running a continuous evaluation. And their conflicting thoughts — accusing or defending. The internal courtroom that every human operates, awake or asleep, wanted or not.

Paul described the judgment layer. He described it as hardware. He described it as universal. He described it as a moral measurement apparatus that operates independently of whether the person has received external instruction. And he did it two thousand years before Damasio mapped the ventromedial prefrontal cortex, before the Yale Baby Lab showed that six-month-olds prefer helpers over harmers, and before cross-cultural anthropology confirmed the same moral foundations on every continent.

That's the same pattern we saw in Chapter Two. The text predicts the science. The gap is two millennia.

Here's what this means for the argument.

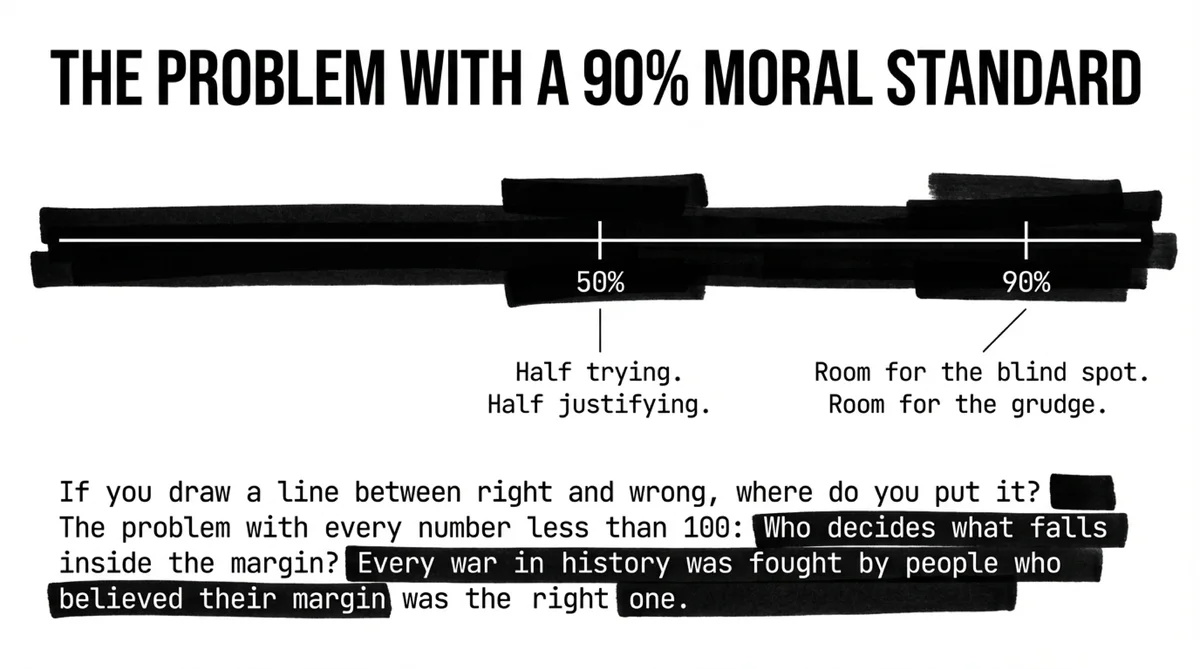

If every human being comes equipped with a moral evaluator they didn't choose, can't uninstall, and can't override even when they want to — then accountability isn't what most people think it is.

Accountability isn't God watching you from the outside and keeping a list. That's the cartoon version. What the data suggests is something deeper and more unsettling.

God wrote the measurement apparatus into the observer.

You're not being judged by someone else. You're being judged by the equipment you came with. The verdict is being generated inside you, in real time, every moment of every day. You feel it when you lie and no one catches you. You feel it when you do the right thing and no one notices. You feel it when you look away from something you should have confronted.

That's not culture. That's not upbringing. That's not social conditioning. That's the law written on your heart, your conscience bearing witness.

And the fact that you can't turn it off, that even damaged hardware leaves the rules intact after the felt weight disappears, tells you something about the Architect. He didn't give you a suggestion. He gave you a measuring instrument. And instruments don't care whether you like the reading.

So now we have five data points in the series.

The playing field is level: both systems stand on unprovable axioms. The evidence runs in both directions: fifteen predictions forward, ten gaps backward. Math works because the Author of logic and the Author of reality are the same. Science converges rather than accumulates, and the convergence points toward a coherent structure behind the models. And every human being carries a moral measurement apparatus they didn't install.

One question remains before the verdict, and it's the simplest one. The one a child could ask.

Is the thing at the bottom of reality good — or isn't it?